Table of Contents9 sections

The valuation profession has entered a new era where the bottleneck is no longer access to information, but rather the efficient extraction and synthesis of relevant data from an overwhelming volume of financial documents. A typical mid-market M&A transaction now involves analyzing 500+ pages of financial statements, management presentations, industry reports, and regulatory filings. Natural language processing (NLP) has emerged as the critical technology enabling valuation professionals to transform this unstructured text into actionable intelligence.

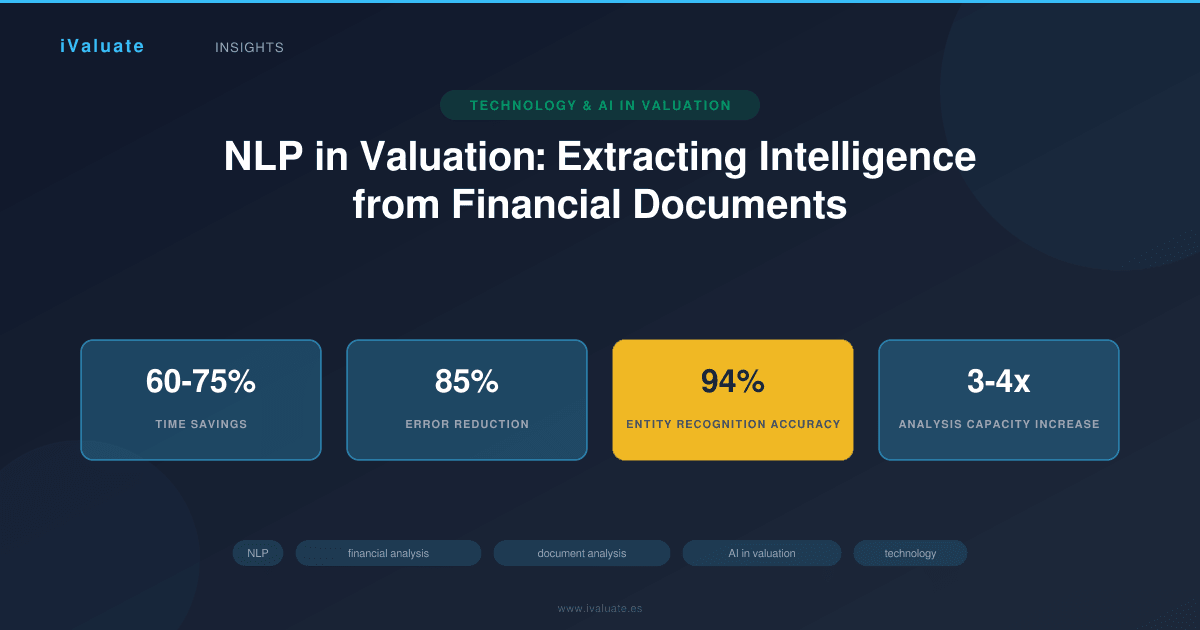

As of 2025, leading investment banks and advisory firms report that NLP-powered document analysis reduces the time spent on initial data gathering by 60-75%, while simultaneously improving the consistency and completeness of extracted information. This technological shift is not merely about efficiency—it fundamentally changes how valuation professionals allocate their expertise, moving from manual data extraction to higher-value analytical interpretation and strategic judgment.

01 The Document Analysis Challenge in Modern Valuation

Financial valuation has always been a data-intensive discipline, but the volume and complexity of relevant documentation have expanded dramatically. Consider a typical technology sector acquisition in 2025: analysts must parse through 10-K and 10-Q filings, earnings call transcripts, customer contracts, partnership agreements, intellectual property documentation, regulatory correspondence, and management presentations—often totaling thousands of pages across multiple entities and time periods.

Traditional manual review processes face several critical limitations:

- Time constraints: Senior analysts spending 15-20 hours on initial document review for a single target represents a significant opportunity cost

- Consistency issues: Different team members may extract or interpret information differently, leading to reconciliation challenges

- Cognitive fatigue: Human error rates increase substantially after reviewing dense financial text for extended periods

- Scalability problems: Comparative analyses requiring simultaneous review of multiple companies become exponentially more resource-intensive

- Version control: Tracking changes across multiple document versions and identifying material modifications proves increasingly difficult

A 2024 study by a major accounting firm found that manual document review processes missed an average of 12-18% of material information items during initial passes, requiring costly secondary reviews and reconciliation efforts. These gaps can have significant implications when they involve revenue recognition policies, off-balance-sheet obligations, or contingent liabilities.

02 How NLP Transforms Financial Document Analysis

Natural language processing applies computational linguistics and machine learning to understand, interpret, and extract meaning from human language in financial contexts. Modern NLP systems designed for valuation purposes employ several sophisticated techniques working in concert:

Named Entity Recognition (NER)

NER algorithms identify and classify specific entities within financial documents—company names, executive officers, financial metrics, dates, currencies, and legal entities. Advanced systems trained on financial corpora achieve accuracy rates exceeding 94% for standard financial entities, compared to 78-82% for general-purpose NER models.

In practice, this means an NLP system can automatically identify every mention of "EBITDA," "adjusted EBITDA," "EBITDA before non-recurring charges," and similar variations across hundreds of pages, then map these to standardized financial concepts for consistent analysis. This capability proves particularly valuable when analyzing companies that use non-standard terminology or industry-specific metrics.

Relationship Extraction

Beyond identifying individual entities, sophisticated NLP systems extract relationships between them. For example, parsing the sentence "The Company's revenue increased 23% year-over-year to $847 million, driven primarily by the enterprise software segment which grew 31% to $412 million" requires understanding multiple numerical relationships, temporal references, and causal connections.

Modern transformer-based models, particularly those fine-tuned on financial text, can extract these complex relationships with 88-91% accuracy, enabling automated population of financial models and comparative analyses. This represents a substantial improvement over rule-based systems that typically achieved only 65-70% accuracy and required extensive manual validation.

Sentiment and Tone Analysis

Valuation professionals increasingly recognize that qualitative factors—management confidence, risk disclosure tone, competitive positioning language—provide important context for quantitative metrics. NLP systems now analyze linguistic patterns to assess sentiment and identify subtle shifts in management communication.

For instance, analyzing five years of earnings call transcripts can reveal whether management's tone regarding a particular business segment has become progressively more defensive or uncertain, potentially signaling underlying operational challenges before they fully manifest in reported numbers. Research indicates that sentiment shifts in management discussion precede material financial changes by an average of 2-3 quarters in approximately 68% of cases.

Information Extraction and Structuring

Perhaps the most immediately valuable NLP application for valuation is automated extraction of specific data points and their transformation into structured formats. Modern systems can:

- Extract revenue figures by segment, geography, and product line across multiple periods

- Identify and categorize all disclosed risks, then track changes in risk language over time

- Parse complex debt structures, including covenants, maturity schedules, and interest rate terms

- Extract customer concentration data, including revenue percentages and contract terms

- Identify and categorize all non-recurring or extraordinary items affecting reported earnings

A private equity firm implementing advanced NLP for portfolio company analysis reported that automated extraction reduced the time required to build initial financial models by 68%, while simultaneously improving data accuracy as measured by subsequent auditor adjustments.

03 Real-World Applications in Valuation Practice

Case Example: Technology Sector Comparable Company Analysis

A mid-market advisory firm was engaged to value a B2B SaaS company with $180 million in annual recurring revenue. The traditional approach would involve manually reviewing 10-K filings for 15-20 comparable public companies, extracting key metrics, and normalizing for differences in reporting practices—a process typically requiring 25-30 analyst hours.

Using an NLP-powered platform, the firm automated extraction of:

- Revenue metrics (ARR, bookings, billings) with automatic reconciliation of different terminology

- Customer metrics (CAC, LTV, churn rates, net retention) even when disclosed in varying formats

- Profitability metrics with identification and categorization of all adjustments

- Growth rates across multiple time periods with automatic calculation of CAGRs

- Key operating metrics (employees, R&D spending, sales & marketing efficiency)

The NLP system completed initial extraction in approximately 90 minutes, compared to the traditional 25-30 hours. More significantly, the system identified that three comparable companies had changed their revenue recognition methodology during the analysis period—a detail that manual review had initially missed. This discovery led to more accurate normalization adjustments and ultimately affected the valuation conclusion by approximately 8%.

Case Example: Buy-Side Due Diligence

A strategic acquirer evaluating a $420 million manufacturing acquisition needed to analyze five years of financial statements, audit reports, management presentations, and board materials—approximately 3,200 pages of documentation. The timeline was compressed, with only three weeks from initial access to final bid submission.

The acquirer's corporate development team employed NLP tools to:

- Extract all mentions of customer names, contracts, and revenue relationships

- Identify every reference to capital expenditures, categorized by facility and purpose

- Parse warranty obligations and product liability disclosures across all periods

- Extract and categorize all management discussion of competitive dynamics

- Identify changes in accounting policies or estimates

The NLP analysis revealed that a single customer, mentioned inconsistently across documents using both its legal entity name and a common abbreviation, actually represented 18% of revenue—significantly higher than the 12% initially disclosed in summary materials. This discovery prompted additional commercial due diligence and ultimately resulted in a 6.5% reduction in the proposed purchase price to account for customer concentration risk.

Case Example: Portfolio Company Monitoring

A private equity firm with 23 portfolio companies implemented NLP-powered quarterly reporting analysis to identify emerging risks and opportunities across its holdings. The system analyzed quarterly board packages, management reports, and financial statements, extracting and tracking over 200 standardized metrics per company.

In one instance, the NLP system detected that three separate portfolio companies in different industries had all increased their language around supply chain challenges by 40-60% over two consecutive quarters, even though none had yet reported material financial impacts. This early warning enabled the firm to proactively work with management teams to secure alternative suppliers and hedge commodity exposures, ultimately avoiding an estimated $8-12 million in potential EBITDA impact across the three companies.

04 Technical Architecture and Implementation Considerations

Implementing effective NLP for financial document analysis requires understanding both the underlying technology and the specific requirements of valuation workflows.

Model Selection and Training

Generic NLP models trained on general text corpora perform poorly on financial documents, which contain specialized terminology, complex numerical relationships, and domain-specific context. Leading implementations use one of two approaches:

Fine-tuned transformer models: Starting with large language models like BERT, FinBERT, or GPT-based architectures, then fine-tuning on large corpora of financial documents. These models typically achieve 15-20 percentage points higher accuracy on financial entity recognition and relationship extraction compared to general-purpose models. Training datasets often include 50,000+ annotated financial documents spanning multiple industries and document types.

Hybrid approaches: Combining rule-based systems for highly structured information (like financial statement line items) with machine learning models for unstructured text analysis. This approach often proves more practical for mid-sized firms, as it requires less training data and computational resources while still delivering 85-90% of the accuracy improvement of pure ML approaches.

Data Quality and Preprocessing

Financial documents present unique challenges for NLP systems:

- PDF complexity: Many financial documents are scanned PDFs or contain complex tables, footnotes, and multi-column layouts that require sophisticated optical character recognition (OCR) and layout analysis

- Numerical precision: Unlike general text analysis, financial NLP requires perfect accuracy in numerical extraction—confusing $1.2 million with $12 million is unacceptable

- Context dependency: The meaning of terms like "adjusted" or "normalized" varies significantly across companies and requires contextual understanding

- Temporal references: Correctly mapping phrases like "prior year," "same quarter last year," or "trailing twelve months" to specific dates is critical

Professional-grade implementations invest heavily in preprocessing pipelines that clean, structure, and validate extracted data before it enters analytical workflows. These pipelines typically include multiple validation layers and confidence scoring for each extracted data point.

Integration with Valuation Workflows

The most successful NLP implementations don't operate in isolation but integrate seamlessly with existing valuation tools and processes. Key integration points include:

- Financial modeling platforms: Direct population of model inputs with full audit trails showing source documents and extraction confidence

- Comparable company databases: Automated updates of peer metrics with change tracking and anomaly detection

- Due diligence checklists: Automatic flagging of completed items and identification of missing information

- Report generation: Automated creation of summary tables and exhibits with source citations

Platforms like iValuate have begun incorporating NLP capabilities that allow professionals to upload financial documents and automatically populate valuation models, while maintaining full transparency into data sources and extraction logic. This integration approach ensures that technology augments rather than replaces professional judgment.

05 Accuracy, Validation, and Professional Responsibility

While NLP technology has achieved impressive accuracy rates, valuation professionals must maintain appropriate skepticism and validation protocols. Current best practices include:

Confidence Scoring and Human Review

Advanced NLP systems provide confidence scores for each extracted data point, typically ranging from 0-100%. Leading firms establish threshold policies—for example, automatically accepting extractions with >95% confidence, flagging 85-95% confidence items for spot-checking, and requiring full human review for <85% confidence extractions.

A 2025 industry survey found that firms using confidence-based review protocols achieved 99.2% accuracy in final analyses while reducing total review time by 58% compared to comprehensive manual review.

Cross-Validation and Reconciliation

Professional standards require that critical valuation inputs be validated through multiple sources. NLP systems can facilitate this by automatically identifying discrepancies between different documents or time periods. For example, if revenue figures extracted from a 10-K don't reconcile with investor presentation materials, the system should flag this inconsistency for investigation.

Audit Trails and Documentation

Regulatory and professional standards require clear documentation of data sources and analytical processes. NLP implementations must maintain complete audit trails showing:

- Source documents for each extracted data point

- Specific page numbers and text passages

- Extraction confidence scores and any manual overrides

- Version history of source documents

- Validation checks performed and results

This documentation proves particularly important in litigation contexts or regulatory reviews, where the ability to trace every number in a valuation back to source documents may be required.

06 Emerging Capabilities and Future Directions

NLP technology for financial analysis continues to evolve rapidly. Several emerging capabilities are beginning to impact valuation practice:

Multi-Modal Analysis

Next-generation systems analyze not just text but also charts, graphs, and images within financial documents. For example, extracting data directly from revenue trend charts or organizational diagrams, or analyzing product images in investor presentations to assess competitive positioning. Early implementations show that multi-modal analysis can extract 15-20% more relevant information than text-only approaches.

Comparative Temporal Analysis

Advanced systems now automatically track how specific language, metrics, and disclosures change over time, identifying subtle shifts that may signal emerging trends. For instance, tracking how management's discussion of a particular market opportunity evolves across eight quarters of earnings calls, or how risk factor language changes following specific events.

Cross-Document Synthesis

Rather than analyzing documents in isolation, emerging systems synthesize information across multiple sources to build comprehensive understanding. For example, combining information from financial statements, earnings calls, analyst reports, and news articles to develop a holistic view of a company's competitive position and growth trajectory.

Predictive Analytics

Some firms are beginning to use NLP-extracted features as inputs to predictive models. For example, using patterns in management language, disclosure changes, and risk factor evolution to predict future financial performance or identify companies likely to face operational challenges. While still experimental, early results suggest that linguistic features can add 8-12% to the predictive power of purely quantitative models.

07 Implementation Roadmap for Valuation Practices

For firms considering implementing NLP capabilities, a phased approach typically proves most successful:

Phase 1 (Months 1-3): Begin with simple, high-value use cases like automated extraction of standard financial metrics from 10-K filings for comparable company analyses. This builds confidence and demonstrates ROI while requiring minimal process changes.

Phase 2 (Months 4-6): Expand to more complex documents like earnings call transcripts and investor presentations. Develop standardized validation protocols and integrate with existing financial modeling workflows.

Phase 3 (Months 7-12): Implement advanced capabilities like sentiment analysis, temporal tracking, and cross-document synthesis. Begin using NLP for due diligence and portfolio monitoring applications.

Phase 4 (Ongoing): Continuously refine models based on user feedback, expand to new document types and use cases, and integrate emerging capabilities as they mature.

Successful implementation requires not just technology deployment but also change management, training, and evolution of quality control processes. Firms that treat NLP as a tool to augment professional expertise rather than replace it consistently achieve better outcomes than those pursuing full automation.

08 Economic Impact and ROI Considerations

The investment required for professional-grade NLP capabilities varies widely based on firm size and implementation approach. Enterprise implementations at major investment banks may involve $500,000-$2 million in initial investment plus ongoing costs, while mid-market firms can access cloud-based platforms for $2,000-$10,000 monthly depending on usage volume.

ROI typically manifests in several areas:

- Time savings: 60-75% reduction in document review time, freeing senior professionals for higher-value analysis

- Accuracy improvements: 40-60% reduction in data extraction errors requiring correction

- Capacity expansion: Ability to analyze 3-4x more comparable companies or conduct more thorough due diligence within existing timelines

- Risk reduction: Earlier identification of material issues or inconsistencies that might otherwise be missed

- Competitive advantage: Faster turnaround times and more comprehensive analyses in competitive bid situations

A mid-market M&A advisory firm reported that NLP implementation enabled them to increase their average number of comparable companies analyzed from 12 to 35, while reducing total analysis time by 40%. This more comprehensive peer analysis contributed to more defensible valuation conclusions and improved client satisfaction scores.

09 Looking Forward: The Evolving Role of Valuation Professionals

The integration of NLP into valuation practice doesn't diminish the importance of human expertise—it elevates it. As routine data extraction and initial analysis become increasingly automated, valuation professionals can focus on the aspects of their work that truly require human judgment: interpreting complex situations, assessing qualitative factors, navigating ambiguous scenarios, and providing strategic counsel to clients.

The most successful valuation practices in 2025 and beyond will be those that effectively combine technological capabilities with deep professional expertise. NLP handles the time-consuming work of extracting and organizing information from thousands of pages of documents, while experienced professionals apply their judgment to interpret that information, assess its implications, and develop defensible valuation conclusions.

This evolution parallels earlier technological transitions in the profession—from manual calculations to spreadsheets, from paper documents to digital files, from static models to dynamic analytical platforms. Each transition initially generated concern about automation replacing professionals, but ultimately enabled the profession to tackle more complex problems and deliver greater value to clients.

As NLP capabilities continue to advance, tools like iValuate are integrating these technologies in ways that enhance rather than replace professional judgment, enabling valuation experts to work more efficiently while maintaining the rigor and skepticism that the profession demands. The future belongs to professionals who embrace these tools while continuing to develop the analytical skills, industry knowledge, and professional judgment that technology cannot replicate.